Discover how artificial intelligence in nursing practice works step by step. A Ghana RN with 10+ years and systems engineering expertise explains AI tools every nurse must know.

| Key Takeaways |

| ✓ Artificial intelligence in nursing practice ranges from predictive analytics and clinical decision support to robotic process automation and AI-powered documentation tools. |

| ✓ AI does NOT replace nurses — it amplifies clinical judgment, reduces administrative burden, and flags patient deterioration earlier. |

| ✓ Understanding how AI systems work helps nurses use, troubleshoot, and advocate for these tools effectively at the bedside. |

| ✓ Patient safety must remain the central concern when adopting any AI tool — always apply critical nursing judgment alongside AI outputs. |

| ✓ Nurses in resource-limited settings like Ghana can leverage AI on mobile platforms, reducing the hardware barrier to entry. |

| ✓ The Nursing and Midwifery Council (NMC) Ghana and global bodies such as the WHO emphasise the nurse’s professional accountability even when AI assists clinical decisions. |

| ✓ Developing basic digital literacy, data literacy, and AI ethics knowledge is now a core competency for 21st-century nurses. |

Introduction: The Algorithm That Could Have Saved the Transfer Decision

It was 2:17 a.m. on a Tuesday when a 47-year-old hypertensive patient arrived in our Emergency Room at a district-level facility under the Ghana Health Service. His vital signs seemed borderline stable: heart rate 102, blood pressure 138/90, and mild shortness of breath, which he attributed to ‘rushing from home.’ My gut said something was off, but I had three other critical patients that night. We stabilised and monitored him, and by 4 a.m. he had gone into early pulmonary oedema. We transferred him, and he survived, but only barely.

I have thought about that night many times. Today, an AI-powered early warning score system would have flagged that patient’s risk trajectory within the first 30 minutes of admission. It would have cross-referenced his vital-sign trend, medication history, and demographic risk factors and pushed an alert to my screen. That is not science fiction. That is clinical AI — already running in hospitals across the UK, USA, and parts of sub-Saharan Africa.

My name is Abdul-Muumin Wedraogo. I am a Registered General Nurse with over 10 years of clinical experience across Emergency Room, Paediatric, ICU, and General Ward settings in the Ghana Health Service. I hold a BSc in Nursing from Valley View University, a Diploma in Network Engineering, and an Advanced Professional in System Engineering from OpenLabs and IPMC Ghana. I am a registered member of both the Nursing and Midwifery Council (NMC) Ghana and the Ghana Registered Nurses and Midwives Association (GRNMA).

That dual background, bedside nursing and systems architecture, gives me an unusual vantage point. I understand what nurses need from technology, and I also understand how that technology actually works under the hood. This guide is for every nurse who has heard the words ‘artificial intelligence’ in a staff meeting and felt excited, confused, or quietly threatened.

By the end of this article, you will understand what artificial intelligence in nursing practice means at a practical level, how to use AI tools safely in your workflow, the ethical and professional obligations that come with AI adoption, and how nurses in settings like ours — where resources are stretched — can still benefit from these advances. Let us begin.

1. What Is Artificial Intelligence? A Nurse-Friendly Foundation

1.1 Defining AI Without the Jargon

Artificial intelligence refers to computer systems designed to perform tasks that normally require human intelligence — things like recognising patterns, making decisions, interpreting language, or predicting outcomes (Topol, 2019). That is the simplest, honest definition.

Think of it this way: when you read a patient’s chart and your experienced brain automatically spots that the combination of rising lactate, falling blood pressure, and elevated temperature suggests sepsis, you are doing pattern recognition. An AI algorithm does the same thing, except it has been trained on millions of patient records instead of a decade of clinical shifts.

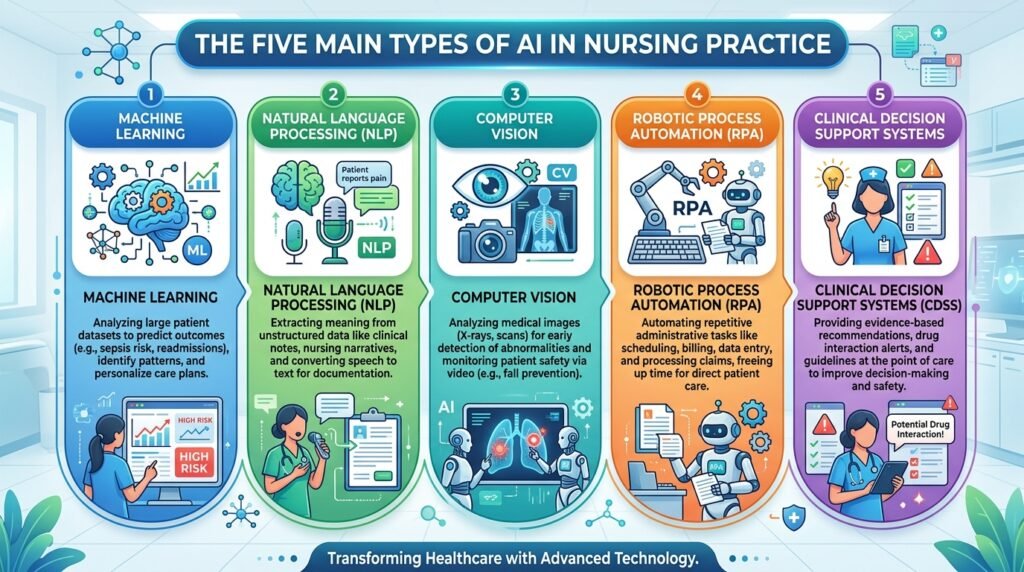

There are several major types of AI relevant to nursing practice:

- Machine Learning (ML): Algorithms that learn from data without being explicitly programmed for each outcome. Example: sepsis prediction tools.

- Natural Language Processing (NLP): AI that understands and generates human language. Example: AI-powered documentation assistants that transcribe nursing notes.

- Computer Vision: AI that interprets images. Example: wound assessment tools that analyse photographs to track healing.

- Robotic Process Automation (RPA): AI that automates repetitive digital tasks. Example: auto-population of patient discharge summaries.

- Clinical Decision Support Systems (CDSS): AI that presents evidence-based recommendations at the point of care. Example: drug-dose calculators with allergy alerts.

1.2 A Systems Engineer’s Explanation of How AI Actually Works

With my background in network and system engineering, I want to give you a slightly deeper look — because understanding the mechanism makes you a smarter, safer user of the tool.

At its core, a machine learning model is a mathematical function that maps inputs to outputs. During training, the model is shown thousands or millions of examples (e.g., ‘patient with these 12 vital-sign patterns developed sepsis within 6 hours: true’). It adjusts its internal parameters (anywhere from a handful of numbers in a simple risk score to billions in a large neural network) to get better at predicting the correct output. Think of it like tuning an IV drip rate: you watch the patient’s response and keep adjusting until you hit the right flow.
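
For readers who want to see ‘adjusting parameters’ in action, here is a minimal sketch in plain Python: a one-input logistic model trained by gradient descent. The data are invented for illustration (made-up heart rates paired with made-up outcome labels), and the names `EXAMPLES`, `predict`, and `train` are my own. Real clinical models use many more inputs and far more data.

```python
import math

# Invented toy data: (heart rate, deteriorated within 6 hours?). Not real patients.
EXAMPLES = [(70, 0), (78, 0), (85, 0), (92, 0), (104, 1), (110, 1), (118, 1), (125, 1)]


def scale(heart_rate: float) -> float:
    """Centre and scale the input so the training steps stay stable."""
    return (heart_rate - 100.0) / 10.0


def predict(weight: float, bias: float, heart_rate: float) -> float:
    """Map an input to a probability between 0 and 1 (logistic function)."""
    return 1.0 / (1.0 + math.exp(-(weight * scale(heart_rate) + bias)))


def train(examples, steps: int = 2000, learning_rate: float = 0.1):
    """Repeatedly nudge two parameters so predictions move toward the labels."""
    weight, bias = 0.0, 0.0
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for heart_rate, label in examples:
            error = predict(weight, bias, heart_rate) - label
            grad_w += error * scale(heart_rate)
            grad_b += error
        weight -= learning_rate * grad_w / len(examples)
        bias -= learning_rate * grad_b / len(examples)
    return weight, bias


weight, bias = train(EXAMPLES)
low_risk = predict(weight, bias, 75)    # probability for a slow heart rate
high_risk = predict(weight, bias, 120)  # probability for a fast heart rate
```

The model starts with both parameters at zero, which means it knows nothing, and each pass moves them slightly in the direction that reduces its errors. That is the whole mechanism, just with two numbers instead of many.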

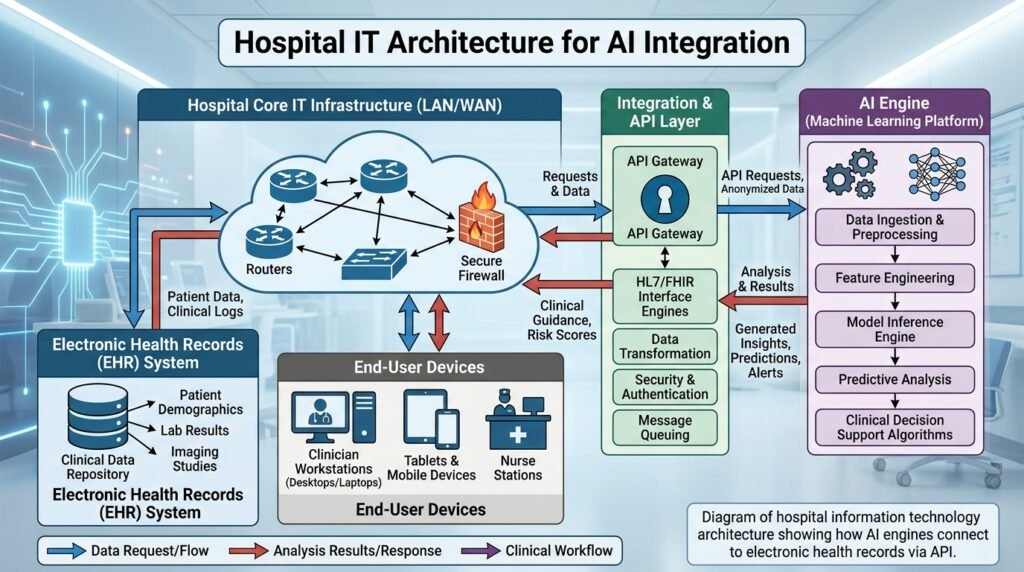

Once trained, the model is deployed. In a hospital system, it connects to the Electronic Health Record (EHR) database via an Application Programming Interface (API) — essentially a communication bridge between two software systems. The model reads real-time patient data, runs its calculations in milliseconds, and sends an alert or recommendation back to the nurse’s screen.

This is why connectivity matters. In my experience working in facilities where the local area network (LAN) had intermittent failures, even excellent AI tools become unreliable. I will address troubleshooting and infrastructure considerations in the Technical Deep-Dive section.
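
The ‘communication bridge’ between the EHR and the model can be sketched as a simple exchange of JSON messages. This is only an illustration under my own assumptions: the field names (`patient_id`, `heart_rate`, `systolic_bp`), the function names, and the scoring rule are all hypothetical, and a real integration would follow whatever interface your EHR vendor defines.

```python
import json

REQUIRED_FIELDS = ("patient_id", "heart_rate", "systolic_bp")  # hypothetical field names


def placeholder_risk(heart_rate: float, systolic_bp: float) -> float:
    """Stand-in for a trained model. The rule is invented, not clinical guidance."""
    risk = 0.1
    if heart_rate > 100:
        risk += 0.3
    if systolic_bp < 100:
        risk += 0.3
    return round(risk, 2)


def handle_score_request(request_body: str) -> str:
    """Receive a JSON message from the EHR side and reply with a JSON message."""
    data = json.loads(request_body)
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        # Incomplete data is reported back rather than silently scored.
        return json.dumps({"status": "error", "missing_fields": missing})
    risk = placeholder_risk(data["heart_rate"], data["systolic_bp"])
    return json.dumps({"status": "ok", "patient_id": data["patient_id"], "risk": risk})


request = json.dumps({"patient_id": "demo-001", "heart_rate": 102, "systolic_bp": 138})
reply = json.loads(handle_score_request(request))
incomplete_reply = json.loads(handle_score_request(json.dumps({"patient_id": "demo-002"})))
```

Notice that an incomplete request gets an explicit error instead of a score. From the nurse’s side, that is one reason an alert may fail to appear when documentation is only partly entered.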

2. How AI Is Being Used in Nursing Practice Today: Clinical Application

2.1 Early Warning and Deterioration Detection

One of the most powerful and well-evidenced applications of AI in nursing is early warning systems (EWS). Traditional NEWS (National Early Warning Score) systems rely on nurses entering vital signs and calculating a score manually. AI-enhanced EWS take this further by analysing trends, not just single readings, and by incorporating variables such as laboratory results, medication history, and even nurse-documented observations.

The Epic Deterioration Index, used in many US hospitals, demonstrated a 44.8% reduction in unexpected ICU transfers when integrated into nurse workflows (Churpek et al., 2020). In our ICU at the Ghana Health Service, we do not yet have such a system in place, but mobile-based early warning apps such as those built on the WHO SMART guidelines are already available and usable on standard Android devices.

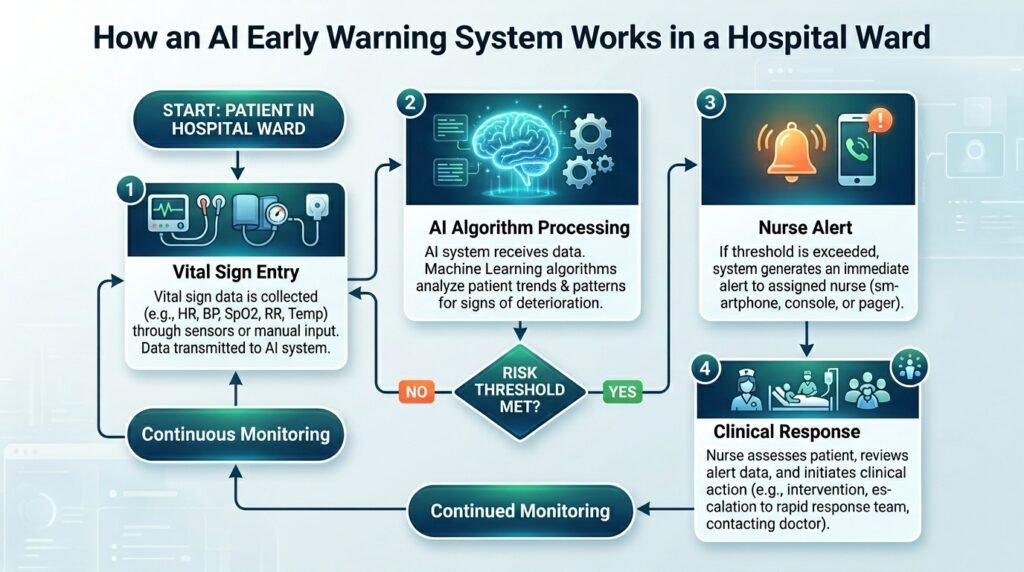

Step-by-step, here is how a nurse interacts with an AI-powered early warning system:

1. Document vital signs in the EHR or mobile app as you normally would.

2. The AI engine reads your entries in real time, compares them to the patient’s baseline, and analyses the trend over the past 4–6 hours.

3. If the algorithm detects a concerning trajectory, it generates an alert, colour-coded by severity.

4. The alert pops up on your screen (or sends an SMS in mobile-first setups) with a summary: ‘Patient deterioration risk HIGH. Consider urgent medical review.’

5. You apply your clinical judgment: Does this alert align with what you are seeing at the bedside? If yes, escalate. If not, document your assessment and rationale.

6. Your response, or non-response, is logged and feeds back into the AI system over time, improving its calibration.

Key point: Step 5 is the most important. The AI presents a probability. You make the clinical decision. Never bypass your assessment in favour of an algorithm.
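
To show what ‘analysing the trend, not just single readings’ can mean, here is a minimal sketch that fits a straight line through a few hourly heart-rate readings and grades the slope. The cut-offs `AMBER_RISE` and `RED_RISE` are hypothetical values I chose for illustration, and real early warning systems combine many variables with validated thresholds.

```python
def slope_per_hour(readings):
    """Least-squares slope for a list of (hours_since_admission, value) pairs."""
    n = len(readings)
    if n < 2:
        return 0.0
    mean_t = sum(t for t, _ in readings) / n
    mean_v = sum(v for _, v in readings) / n
    spread = sum((t - mean_t) ** 2 for t, _ in readings)
    if spread == 0:
        return 0.0  # all readings share one timestamp, so no trend can be estimated
    return sum((t - mean_t) * (v - mean_v) for t, v in readings) / spread


# Hypothetical cut-offs in beats per minute per hour, chosen only for illustration.
AMBER_RISE = 3.0
RED_RISE = 6.0


def trend_severity(readings):
    """Grade the trend with a colour code, as in step 3 of the workflow."""
    rise = slope_per_hour(readings)
    if rise >= RED_RISE:
        return "red"
    if rise >= AMBER_RISE:
        return "amber"
    return "green"


heart_rate = [(0, 88), (1, 92), (2, 97), (3, 102)]  # invented readings
severity = trend_severity(heart_rate)
```

Here no single heart rate is dramatic, yet the fitted rise of 4.7 beats per minute per hour crosses the illustrative amber cut-off. That is the kind of slow drift a trend-based tool is meant to surface.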

2.2 AI in Medication Management and Drug Safety

Medication errors remain one of the leading causes of preventable harm in healthcare globally. The WHO’s Medication Without Harm initiative identified medication errors as responsible for approximately 1.3 million injuries annually in the United States alone (WHO, 2022a). AI is dramatically improving safety in this domain.

Computerised Physician Order Entry (CPOE) systems with embedded AI check for drug interactions, weight-based dosing errors, contraindications with existing diagnoses, and allergy conflicts — in real time. As the nurse administering the medication, you interact with this AI every time the system flags a warning.

In my experience in paediatrics, where weight-based dosing is critical and a decimal-point error can be fatal, these AI-assisted safety checks are not optional luxuries; they are lifesavers. I recall a close call involving a tenfold paediatric dosing calculation error that was caught by the system before administration. That alert saved a child’s life.
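
The kind of check that catches a tenfold error can be sketched in a few lines. This is a simplified illustration, not how any particular CPOE product works: the function name, the `DoseCheck` record, and the 10 to 15 mg/kg range for an unnamed drug are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DoseCheck:
    mg_per_kg: float
    status: str                   # "within range", "below range", or "above range"
    possible_tenfold_error: bool


def check_weight_based_dose(weight_kg, dose_mg, min_mg_per_kg, max_mg_per_kg):
    """Compare an ordered dose against a per-kilogram range for the child's weight."""
    if weight_kg <= 0:
        raise ValueError("weight must be positive")
    mg_per_kg = dose_mg / weight_kg
    if mg_per_kg < min_mg_per_kg:
        status = "below range"
    elif mg_per_kg > max_mg_per_kg:
        status = "above range"
    else:
        status = "within range"
    # An out-of-range dose that lands back inside the range when divided or
    # multiplied by ten is a classic sign of a misplaced decimal point.
    tenfold = status != "within range" and (
        min_mg_per_kg <= mg_per_kg / 10 <= max_mg_per_kg
        or min_mg_per_kg <= mg_per_kg * 10 <= max_mg_per_kg
    )
    return DoseCheck(mg_per_kg, status, tenfold)


# Hypothetical drug with an illustrative 10 to 15 mg/kg single-dose range.
intended = check_weight_based_dose(12.0, 150.0, 10.0, 15.0)
slipped = check_weight_based_dose(12.0, 1500.0, 10.0, 15.0)
```

For a 12 kg child, 150 mg works out to 12.5 mg/kg and passes, while 1500 mg works out to 125 mg/kg and is flagged both as above range and as a possible decimal-point slip.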

2.3 AI-Powered Documentation and NLP Tools

Documentation is one of the most time-consuming — and often resented — aspects of nursing. A 2021 study in the Journal of the American Medical Informatics Association found that nurses spend up to 35% of their shift time on documentation (Arndt et al., 2021). AI-powered natural language processing tools are changing this.

Ambient clinical intelligence (ACI) tools like Nuance DAX and similar platforms allow nurses to speak naturally about a patient encounter — ‘Patient reports 7/10 pain in left lower quadrant, no rebound tenderness, bowel sounds present’ — and the AI transcribes, structures, and maps the information into the correct EHR fields automatically.

For nurses in Ghana and similar settings, voice-to-text tools available via standard smartphones (even without specialised clinical AI software) are already reducing documentation burden. Google’s speech recognition, integrated into tools like Google Docs, can serve as a basic documentation aid where formal NLP tools are unavailable.

2.4 AI in Patient Monitoring: Wearables and Remote Sensing

Wearable devices equipped with AI analytics are transforming how we monitor patients — both in hospital and at home. Devices like smartwatches can now continuously track heart rate, oxygen saturation, respiratory rate, and even detect atrial fibrillation with sensitivity and specificity comparable to clinical ECG in some studies (Hannun et al., 2019).

In ICU settings, AI-integrated monitoring systems analyse waveform data from multiple bedside monitors simultaneously, correlating patterns that no human observer could track continuously. These systems flag potential arrhythmias, respiratory deterioration, and haemodynamic instability before they become emergencies.

As nurses, our role shifts from ‘constant watcher’ to ‘intelligent responder’: we rely on the AI to surface things we might miss during a busy shift, verify its alerts at the bedside, and focus our cognitive resources on assessment, communication, and care planning.

2.5 AI in Wound Care and Diagnostics Support

AI-powered image recognition tools can now assess wound photographs to track healing trajectories, classify wound types, and recommend treatment modifications. Companies like Tissue Analytics provide platforms where a nurse photographs a wound with a smartphone, the AI measures dimensions, identifies tissue types, and tracks progress over time — automatically generating documentation.

In dermatology support, AI tools have demonstrated accuracy comparable to that of dermatologists in classifying certain skin cancers from images (Esteva et al., 2017). Nurses in remote or resource-limited settings can use these tools as decision support when specialist review is unavailable.

3. Safety and Risk Management: What Every Nurse Must Know

3.1 Understanding AI Limitations — The Dangers of Overreliance

AI systems are powerful, but they have real limitations that nurses must understand and communicate to colleagues and patients. Here are the critical ones:

- Training Data Bias: An AI trained predominantly on data from high-income Western populations may perform poorly on Ghanaian patients with different disease presentations, comorbidity profiles, and genetic factors. Always question whether the tool was validated in a population similar to yours.

- The ‘Black Box’ Problem: Many AI systems — particularly deep learning models — cannot explain their recommendations in human-understandable terms. This makes it difficult to evaluate why the algorithm flagged something. In nursing practice, you must be able to apply clinical reasoning independent of the AI output.

- Alert Fatigue: Poorly calibrated AI systems generate too many false-positive alerts. In my ER experience, when alarms fire constantly and are frequently wrong, nurses begin to ignore them — including the ones that matter. This is one of the most dangerous side effects of poorly implemented AI.

- Connectivity and System Downtime: AI tools require a reliable network infrastructure. I have worked through periods of EHR outages, and the lesson is always the same: maintain manual backup competencies. Never allow AI dependency to erode fundamental clinical skills.

- Data Entry Quality: Garbage in, garbage out. If you enter incorrect vital signs, the AI produces incorrect risk scores. Your documentation accuracy directly determines AI performance.
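
Alert fatigue has an arithmetic explanation. The sketch below computes positive predictive value (the share of alerts that are true) from sensitivity, specificity, and how common the condition is. The figures of 90% sensitivity, 90% specificity, and 2% prevalence are invented for illustration.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Share of fired alerts that correspond to a truly affected patient."""
    true_positive_rate = sensitivity * prevalence
    false_positive_rate = (1.0 - specificity) * (1.0 - prevalence)
    total_alert_rate = true_positive_rate + false_positive_rate
    if total_alert_rate == 0:
        raise ValueError("no alerts would fire with these inputs")
    return true_positive_rate / total_alert_rate


# Invented figures: a tool that sounds accurate, applied to an uncommon condition.
ppv = positive_predictive_value(sensitivity=0.90, specificity=0.90, prevalence=0.02)
```

With these invented figures, `ppv` comes out at about 0.16, so more than four in five alerts would be false alarms even though the tool is ‘90% accurate’ on both measures. This is why calibration to the local patient population matters so much.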

| Safety Warning |

| ⚠ Never override your clinical assessment in deference to an AI recommendation. The algorithm does not know your patient’s eyes, their breathing pattern, or the concern in a family member’s voice. |

| ⚠ Always document your reasoning when you disagree with an AI alert — and your reasoning when you agree. Professional accountability remains with the nurse. |

| ⚠ Report AI errors to your unit manager and, where available, the hospital’s clinical informatics or IT team. Feedback loops are essential for AI improvement and patient safety. |

3.2 Personal Observations from Frontline Practice

During my time in the ICU, I observed a period when a new clinical decision support system was deployed without adequate nurse training. Alerts were firing constantly — some meaningful, many spurious. Within two weeks, experienced nurses were dismissing alerts almost reflexively. One alert — a genuine haemoglobin drop indicating occult bleeding — was dismissed as ‘another false alarm’ for 45 minutes before the nurse re-assessed and caught it.

The lesson I took from that experience: technology without education is more dangerous than no technology at all. When your facility introduces AI tools, demand proper training. If training is inadequate, advocate loudly through your GRNMA channels and nursing leadership.

4. Technical Deep-Dive: How AI Systems Connect to Hospital Infrastructure

4.1 The Hospital Technology Stack — A Nurse’s Map

Understanding the basic architecture of hospital IT helps you troubleshoot AI tool failures intelligently. Here is a simplified map:

- Electronic Health Record (EHR) — the central database of patient information. Think of it as the patient’s digital chart folder.

- Hospital Information System (HIS) — broader system managing admissions, discharges, billing, and departmental workflows.

- Local Area Network (LAN) / Wi-Fi — the plumbing that connects devices to servers. If the LAN is down, AI tools lose their data feed.

- AI Engine / Analytics Server — the computing brain that runs the models. Often hosted on-site or in the cloud.

- API Layer — the translator that lets the EHR talk to the AI engine. When you see an error message like ‘service unavailable,’ the API is usually the problem.

- End-User Devices — tablets, desktop computers, smartphones — the screens you and your colleagues use to receive AI outputs.

With my network engineering background, I want to make one critical point: network latency and packet loss kill the clinical usefulness of AI. If the network between your ward terminal and the AI server is slow or unreliable, alerts arrive late or not at all. I have seen facilities where beautiful AI sepsis tools deployed on ageing switches with 40% packet loss were practically useless. Advocate for modern network infrastructure as passionately as you advocate for adequate PPE.
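
One simple way an informatics team could make late alerts visible is to compare when an observation was recorded with when the resulting alert reached the screen. The sketch below assumes both timestamps are available, and the two-minute limit `MAX_ALERT_DELAY` is a hypothetical figure, not a standard.

```python
from datetime import datetime, timedelta

MAX_ALERT_DELAY = timedelta(minutes=2)  # hypothetical limit, for illustration only


def alert_delay(recorded_at: datetime, displayed_at: datetime) -> timedelta:
    """Time between the nurse saving the observation and the alert appearing."""
    return displayed_at - recorded_at


def is_alert_stale(recorded_at: datetime, displayed_at: datetime,
                   limit: timedelta = MAX_ALERT_DELAY) -> bool:
    """True when the alert took longer than the agreed limit to arrive."""
    return alert_delay(recorded_at, displayed_at) > limit


recorded = datetime(2024, 1, 9, 2, 17, 0)      # invented timestamps
shown_quickly = datetime(2024, 1, 9, 2, 17, 20)
shown_late = datetime(2024, 1, 9, 2, 25, 0)

quick_is_stale = is_alert_stale(recorded, shown_quickly)
late_is_stale = is_alert_stale(recorded, shown_late)
```

Logging these delays over a week gives nursing leadership concrete evidence to take to IT, which is far more persuasive than ‘the system feels slow’.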

4.2 Troubleshooting Common AI Tool Issues at the Bedside

- Tool not loading or displaying data: Check your network connection. Is the Wi-Fi icon showing a signal? Can you access other web-based resources? If not, it is likely a network issue — report to IT immediately.

- Alerts not firing when you expect them: Check that patient data is fully entered and saved. AI algorithms can only analyse data they can access. Incomplete documentation is a common cause.

- Alert scores seem inconsistent with the clinical picture: Cross-check your vital sign entries for accuracy. If entries are correct, document the discrepancy and report it — this is how model calibration errors get identified.

- System down during a critical moment: Revert to manual protocols. Know your unit’s paper-based early warning score sheet. AI tools are aids, not replacements for core clinical competencies.

4.3 AI in Resource-Limited Settings: Making It Work in Ghana

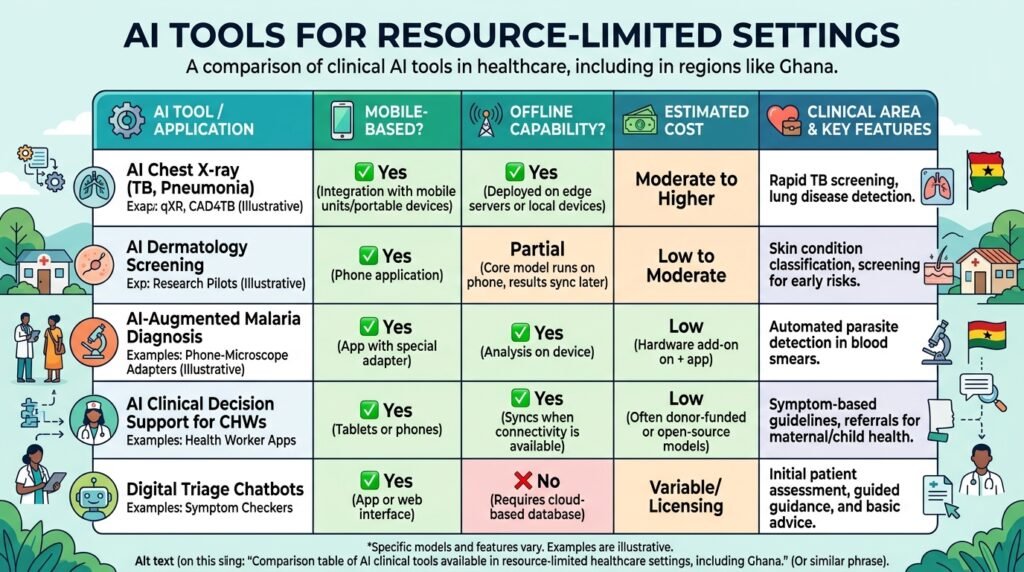

Many of the most capable AI clinical tools were designed for well-resourced environments — stable internet, modern EHR systems, high-specification devices. In Ghana and similar settings, practical adaptations are essential:

- Mobile-First AI: Many AI tools now run on Android smartphones with acceptable performance. WHO’s open-source SMART Guidelines platform and iHRIS provide mobile-compatible clinical support tools deployable even with limited connectivity.

- Offline Capability: Look for AI tools that can function offline and sync when connectivity is restored. This is critical for district hospitals with intermittent internet.

- USSD-Based Decision Support: In contexts where even smartphones are unavailable, USSD platforms (the basic menu systems that run on feature phones) can deliver evidence-based clinical decision support. Pilots exist across West Africa.

- Open-Source Solutions: Tools like OpenMRS (Open Medical Record System) integrate clinical decision support modules at low cost and are widely used across Ghana and sub-Saharan Africa.
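
The offline-and-sync pattern mentioned above can be sketched as a small queue that holds observations until the network returns. This is a simplified illustration with hypothetical names (`OfflineQueue`, `FakeLink`). It keeps data only in memory, whereas a real mobile tool would also save the queue to the device’s storage so nothing is lost if the app closes.

```python
from collections import deque


class OfflineQueue:
    """Hold observations locally and forward them, in order, when sending works."""

    def __init__(self, send):
        self._send = send          # function that raises ConnectionError when offline
        self._pending = deque()

    def record(self, observation) -> None:
        self._pending.append(observation)
        self.sync()                # try immediately; harmless if the network is down

    def sync(self) -> int:
        """Send as many queued observations as possible; return how many went out."""
        sent = 0
        while self._pending:
            try:
                self._send(self._pending[0])
            except ConnectionError:
                break              # still offline: keep everything for the next attempt
            self._pending.popleft()
            sent += 1
        return sent

    def pending_count(self) -> int:
        return len(self._pending)


class FakeLink:
    """Stand-in for a network connection so the sketch can run anywhere."""

    def __init__(self):
        self.online = False
        self.delivered = []

    def send(self, observation) -> None:
        if not self.online:
            raise ConnectionError("no network")
        self.delivered.append(observation)


link = FakeLink()
queue = OfflineQueue(link.send)
queue.record({"patient": "demo-001", "heart_rate": 102})
queue.record({"patient": "demo-001", "heart_rate": 108})
pending_while_offline = queue.pending_count()

link.online = True
sent_after_reconnect = queue.sync()
```

An observation is removed from the queue only after it has been sent successfully, so a dropped connection never silently discards a reading.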

5. Best Practices from 10 Years of Clinical Experience

5.1 Lessons from the ICU

In my ICU practice, the single most important lesson about technology in general — and AI specifically — is this: trust but verify. The monitor shows what the monitor shows. Your hands tell you what they feel. Your eyes see what they see. No algorithm has ever held a patient’s hand and felt the clamminess of early shock. Use AI to augment your senses, not replace them.

Practical ICU tips when working with AI monitoring tools:

- Set threshold reviews: At the start of each shift, review the AI alert thresholds for your patients and confirm they are appropriate for that individual’s baseline. A patient with COPD naturally runs lower SpO2 — the alert threshold should reflect this.

- Trend over snapshot: Train yourself and junior nurses to look at the AI’s trend graphs, not just the current alert status. Gradual deterioration is often more dangerous than sudden changes.

- Silence with purpose: When you silence an AI alert, document why. This habit protects patients and protects you professionally.
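
The threshold review in the first tip amounts to checking each reading against that patient’s own target instead of a single ward-wide number. Here is a minimal sketch. The names are hypothetical, and the two ranges are illustrative only; the real target is whatever has been prescribed for the individual patient.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpO2Target:
    low: int    # lowest acceptable saturation, in percent
    high: int   # highest acceptable saturation, in percent


# Illustrative ranges only; use the target prescribed for the individual patient.
DEFAULT_TARGET = SpO2Target(low=94, high=98)
LOWER_PRESCRIBED_TARGET = SpO2Target(low=88, high=92)


def spo2_status(reading: int, target: SpO2Target) -> str:
    """Classify one SpO2 reading against a patient-specific target range."""
    if reading < target.low:
        return "below target"
    if reading > target.high:
        return "above target"
    return "within target"


reading = 90
status_with_default = spo2_status(reading, DEFAULT_TARGET)
status_with_individual_target = spo2_status(reading, LOWER_PRESCRIBED_TARGET)
```

The same reading of 90% alarms under the default range and is acceptable under the lower prescribed one. A mismatch like this, left uncorrected, is a reliable source of nuisance alerts.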

5.2 Lessons from the Emergency Room

The ER is chaos management. AI in the ER primarily helps with triage — predicting which patients are most critically ill when everyone presents at once. AI-powered triage tools can process Manchester Triage System criteria faster and more consistently than human assessors, especially at 3 a.m. after a 10-hour shift.

ER-specific tips:

- Don’t let AI triage replace your eyes: A patient who looks ‘not bad’ on paper but is anxious, diaphoretic, and gripping the side rail is a sick patient. AI sees numbers; you see the person.

- Use AI for the crowded queue: When the waiting room is full and you need to prioritise, AI-assisted triage scores are valuable. When you have time for a proper assessment, your clinical judgment should lead.

5.3 Lessons from Paediatrics

Paediatric nursing requires extraordinary precision. Weight-based dosing, developmental assessment, and the inability of young children to self-report symptoms make AI tools simultaneously very useful and very high-stakes.

The single most impactful AI application I have encountered in paediatrics is AI-assisted dosing calculators integrated with the child’s current weight in the EHR. Errors in this setting are catastrophic. I encourage every paediatric nurse to advocate for these systems in their facility.

Caution: AI growth charts and developmental screening tools should be validated on African paediatric populations. Reference ranges from high-income countries may not apply, and using them uncritically can lead to misclassification of healthy children.

5.4 Tips for General Ward Nurses

General ward nurses typically interact with AI most through documentation support, medication safety alerts, and discharge planning tools. Practical strategies:

- Embrace AI documentation assistance for the time it saves — but review every AI-generated note before submitting. NLP tools make errors, particularly with drug names, dosages, and complex clinical terminology.

- Use AI for discharge risk scoring. Readmission prediction tools can flag high-risk patients before discharge, prompting more robust social and follow-up planning.

- Teach patients about AI monitoring tools. Patients wearing continuous monitoring devices should understand what the device does, its limitations, and that human nurses remain responsible for their care.

6. Legal, Ethical, and Professional Considerations

6.1 Professional Accountability Cannot Be Delegated to an Algorithm

Let me be unambiguous: in Ghana and globally, the nurse retains full professional and legal accountability for clinical decisions — even when those decisions are supported by AI tools. The NMC Ghana Code of Professional Conduct states that registered nurses are responsible for all care delivered under their name and practice number (NMC Ghana, 2020). Using AI does not change this.

If an AI tool recommends a drug dose and you administer it without independent verification, and it harms the patient, the professional and potentially legal liability falls on you, not the software developer. This is why critical thinking is more important in the age of AI, not less.

6.2 Data Privacy and Patient Confidentiality

AI systems are data-hungry. They require access to patient health records to function. This creates significant obligations around data privacy and confidentiality under Ghanaian law (Data Protection Act, 2012), WHO guidance on digital health ethics, and international frameworks such as the GDPR (relevant where patient data may cross international borders).

Practical guidelines for nurses:

- Never input patient identifying information into AI tools that have not been approved by your facility’s data governance team.

- Be cautious with consumer AI tools such as general chatbots — do not use them to query patient-specific clinical decisions, as your inputs may be used to train third-party models.

- Report any AI system that appears to be inappropriately storing, sharing, or using patient data to your facility’s IT security or data protection officer.

6.3 Informed Consent and Patient Rights

Patients have a right to know when AI is involved in their care. While it is not yet universally required to inform patients of every AI-generated alert or recommendation, best practice — and emerging regulatory guidance — favours transparency. Patients should be told in plain language that algorithmic tools assist clinical decision-making, that these tools are not infallible, and that qualified nurses and doctors make the final clinical decisions.

6.4 Bias, Equity, and the Nurse’s Advocacy Role

AI systems trained on biased data produce biased outputs. Studies have documented that some widely used clinical AI tools perform significantly worse for Black patients, women, and patients from low-income countries (Obermeyer et al., 2019). As nurses — patient advocates by the very definition of our profession — we have an obligation to challenge AI tools that demonstrably disadvantage our patients.

Ask your facility’s AI vendors: Was this tool validated on populations similar to your patient demographics? What are the sensitivity and specificity figures stratified by ethnicity, sex, and socioeconomic status? If they cannot answer, escalate your concerns through professional channels, including the GRNMA.
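
To make ‘stratified’ concrete, here is a minimal sketch that computes sensitivity and specificity separately for each patient subgroup. The records and the subgroup labels A and B are invented, and the function name is my own. A vendor’s validation report would contain the same kind of breakdown using real data.

```python
from collections import defaultdict


def stratified_performance(records):
    """records: iterable of (group, alert_fired, truly_deteriorated) tuples."""
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "tn": 0, "fp": 0})
    for group, alert_fired, deteriorated in records:
        if deteriorated:
            key = "tp" if alert_fired else "fn"
        else:
            key = "fp" if alert_fired else "tn"
        counts[group][key] += 1

    results = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]   # patients who truly deteriorated
        negatives = c["tn"] + c["fp"]   # patients who did not
        results[group] = {
            "sensitivity": c["tp"] / positives if positives else None,
            "specificity": c["tn"] / negatives if negatives else None,
        }
    return results


# Invented records for two hypothetical subgroups, A and B.
RECORDS = (
    [("A", True, True)] * 9 + [("A", False, True)] * 1
    + [("A", False, False)] * 18 + [("A", True, False)] * 2
    + [("B", True, True)] * 6 + [("B", False, True)] * 4
    + [("B", False, False)] * 18 + [("B", True, False)] * 2
)
performance = stratified_performance(RECORDS)
```

In this invented data the tool catches 9 of 10 deteriorating patients in subgroup A and only 6 of 10 in subgroup B, while specificity is identical. A single pooled sensitivity of 75% would hide that gap, which is exactly why the stratified figures are worth asking for.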

7. Practical Tips: Your AI in Nursing Quick Reference

| Actionable Tips for Nurses Using AI Tools |

| 1. Always verify AI recommendations against your clinical assessment — the algorithm augments, not replaces, your judgment. |

| 2. Know your facility’s protocols for AI tool failures — maintain core competencies and manual backup procedures. |

| 3. Document your clinical reasoning even when you agree with an AI output — this is your professional protection. |

| 4. Report AI errors and near-misses through your facility’s incident reporting system — feedback improves the tools. |

| 5. Pursue basic digital literacy training — understanding the difference between a false positive and a true alert could save a life. |

| 6. Advocate for AI tools validated on African patient populations — equity in AI is a patient safety issue. |

| 7. Protect patient data rigorously — never use unapproved platforms for patient-specific clinical queries. |

Common Pitfalls to Avoid

- Alert fatigue: Treating all alerts as background noise. Each alert deserves a response — even if that response is a documented clinical decision to continue current management.

- Over-trust: Assuming the AI is always right because it is technology. Algorithms are imperfect models of a complex biological world.

- Under-trust: Dismissing AI tools entirely because they seem complicated or threatening. Nurses who resist evidence-based technology adoption place patients and themselves at a disadvantage.

- Documentation shortcuts: Using AI-generated notes verbatim without review. You are signing that documentation as accurate — review it.

- Skipping training: Attempting to use AI tools without completing available training modules. Uninformed use is unsafe use.

8. Future Outlook: What Nurses Must Prepare For

The next five to ten years will see artificial intelligence in nursing practice shift from exceptional to routine. Here are the trends nurses should anticipate and prepare for:

8.1 Predictive and Personalised Care

AI will increasingly move from reactive alerts to predictive care pathways. Instead of notifying you that a patient’s condition is deteriorating, future systems will predict, with meaningful accuracy, which patients in the ward are likely to deteriorate in the next 12–24 hours — before any clinical signs appear. This will fundamentally change how nurses plan care and allocate attention during a shift (Johnson et al., 2021).

8.2 AI-Assisted Nursing Education and Simulation

AI is already transforming nursing education through adaptive learning platforms, virtual patient simulations, and AI tutors that provide personalised feedback on clinical reasoning. Nursing students at institutions in Europe and North America are now completing AI-proctored OSCE-style assessments. Expect these technologies to reach African nursing schools within this decade.

8.3 The Rise of Nursing Informatics as a Specialty

Nursing informatics — the integration of nursing science, information science, and computer science — is emerging as one of the fastest-growing nursing specialties globally (AMIA, 2022). Nurses with expertise in clinical AI, data analytics, and health informatics will be in significant demand both in clinical settings and in the technology industry.

I encourage every nurse reading this — particularly those who, like me, have an interest in both the clinical and technical worlds — to pursue formal training in nursing informatics. It is a career path that is just beginning to open in Ghana.

8.4 Skills to Develop Now

- Digital Literacy: Comfort using EHR systems, mobile clinical apps, and basic software tools.

- Data Literacy: Understanding of basic statistics — sensitivity, specificity, positive predictive value — so you can evaluate AI tool performance critically.

- AI Ethics: Understanding of bias, accountability, transparency, and equity in algorithmic systems.

- Change Leadership: As AI adoption accelerates, nursing leaders who can guide teams through technology transitions will be invaluable.

- Continuous Learning Mindset: The specific tools will keep changing. The ability to learn new technologies quickly is the core competency.

Conclusion: The Nurse With the Algorithm

Artificial intelligence in nursing practice is not a distant future — it is an unfolding present. From the sepsis prediction algorithm that could have changed that Tuesday night in our ER, to the NLP tool transcribing your ward round notes as you speak, AI is already reshaping what nursing looks like at the bedside.

But here is what I want you to hold onto: the algorithm is a tool. You are the clinician. No machine has ever sat with a frightened patient at 2 a.m. and made them feel safe. No neural network has ever noticed that a usually talkative grandmother had gone quiet — and understood what that silence meant. Those skills belong to you.

The best nurses of the next decade will not be those who resist AI or those who defer to it blindly. They will be the nurses who understand it well enough to use it wisely, challenge it when it is wrong, advocate for it when it helps their patients, and never let it erode the irreplaceable human judgment at the heart of our profession.

If this guide has been useful to you, I encourage you to share it with a colleague, discuss it at your next unit meeting, and continue exploring the resources in the reference list below. As members of the Ghana Registered Nurses and Midwives Association, we have a collective responsibility to shape how AI is adopted in our healthcare system — not just to accept it passively.

The future of nursing in Ghana will be written by nurses who are informed, engaged, and courageous. That is you.

Frequently Asked Questions About AI in Nursing Practice

FAQ 1: Is AI going to replace nurses?

No, and this is perhaps the most important answer in this entire article. AI replaces tasks, not roles. Repetitive, pattern-matching tasks such as vital-sign trending, drug-interaction checking, and documentation transcription can be automated. But the core of nursing (clinical assessment, therapeutic communication, patient advocacy, ethical decision-making, and the deeply human act of caring for another person in vulnerability) cannot be replicated by any current or foreseeable AI system. The World Health Organization has explicitly stated that technology is meant to support, not supplant, nursing workforce capacity (WHO, 2021). In fact, studies suggest AI adoption in nursing tends to increase job satisfaction by reducing administrative burden rather than reducing the nursing workforce (Topol, 2019).

FAQ 2: Are AI-powered wearable devices accurate enough to trust?

It depends on the device, the metric being measured, and the patient population. For heart rate monitoring, modern wearables are generally accurate under resting or lightly active conditions. For SpO2, accuracy degrades significantly with peripheral vasoconstriction, darker skin tones (an important equity issue), nail polish, and motion artefact. A 2020 study in the New England Journal of Medicine found that pulse oximeters delivered significantly less accurate readings in patients with darker skin, and subsequent research confirmed that AI-integrated wearables can carry similar biases (Sjoding et al., 2020). The clinical lesson: use wearable data as a trend indicator and complement it with standard clinical assessment. Never base critical decisions on wearable data alone.

FAQ 3: How can nurses protect patient data when using AI tools?

Start with facility policy: only use AI tools that have been approved by your hospital’s data governance or IT security team. Never enter patient identifiers (name, hospital number, date of birth) into public AI platforms or chatbots. When using mobile health apps, check that they encrypt patient data and comply with Ghana’s Data Protection Act, 2012 (Act 843). Use strong, unique passwords for all clinical platforms and enable multi-factor authentication where available. If you suspect a data breach or inappropriate data use by an AI system, report it immediately to your IT department and document your report.

FAQ 4: What should I do when an AI alert fires but my clinical assessment disagrees?

Trust your assessment, and document your reasoning clearly. AI alerts represent statistical probabilities, not certainties. If you assess the patient and determine the alert is a false positive, document: (1) the alert generated and its severity, (2) your full clinical assessment findings, (3) your clinical conclusion, and (4) your management plan. This documentation protects the patient by creating a clear record, protects you professionally, and contributes to quality improvement data that helps calibrate the AI system over time. If you are uncertain, escalate to a senior nurse or physician rather than either deferring to the AI or dismissing it.
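The four documentation items above can be captured in a simple structured record. The following is a minimal sketch, not a feature of any particular EHR: the class name `AlertDisagreementNote`, its field names, and the sample values are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class AlertDisagreementNote:
    """Hypothetical record of the four items to document when an alert is judged a false positive."""

    alert_and_severity: str    # (1) the alert generated and its severity
    assessment_findings: str   # (2) full clinical assessment findings
    clinical_conclusion: str   # (3) the nurse's clinical conclusion
    management_plan: str       # (4) the management plan

    def missing_items(self) -> List[str]:
        """Return the names of any items that were left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def as_text(self) -> str:
        """Render the note as four numbered lines."""
        labels = [
            "Alert and severity",
            "Assessment findings",
            "Clinical conclusion",
            "Management plan",
        ]
        values = [getattr(self, f.name) for f in fields(self)]
        return "\n".join(
            f"({i}) {label}: {value}"
            for i, (label, value) in enumerate(zip(labels, values), start=1)
        )


# Invented example values; the management plan is deliberately left blank.
note = AlertDisagreementNote(
    alert_and_severity="Early warning score alert, medium severity",
    assessment_findings="Patient alert and oriented; repeat vital signs within usual range",
    clinical_conclusion="Likely false positive from motion artefact",
    management_plan="",
)
print(note.missing_items())
print(note.as_text())
```

Checking for blank items before saving is one simple way to make sure none of the four elements is skipped on a busy shift.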

FAQ 5: Can nurses in Ghana afford or access AI tools?

Increasingly, yes. The cost barrier is falling significantly. Many of the most impactful clinical AI applications, such as sepsis screening tools, medication safety calculators, and evidence-based clinical decision support, are available as free or low-cost mobile apps that run on standard Android smartphones. Platforms like WHO’s SMART Guidelines, PEPFAR-funded clinical decision support tools, and open-source EHR systems like OpenMRS provide digital clinical decision support, in some cases AI-enhanced, at minimal cost. Internet access remains a barrier in some facilities, which is why offline-capable tools are critical. The technology is available; the advocacy challenge is ensuring adequate digital infrastructure and training in our health facilities.

FAQ 6: Do I need to inform patients that AI is involved in their care?

Best practice says yes, in appropriate, non-alarming language. While Ghanaian regulation does not yet require explicit AI disclosure for every clinical decision support interaction, international consensus is moving toward transparency. You might say: ‘We use computer systems that help us monitor your vital signs and ensure your medications are safe.’ Most patients appreciate knowing that technology supplements human care. If a patient asks directly whether AI is involved in their care, answer honestly. This is a core element of informed consent and respects patient autonomy.

FAQ 7: What is the difference between clinical decision support and AI?

Clinical decision support (CDS) is the broader category — it includes any tool that helps clinicians make better decisions, from simple drug interaction databases to complex predictive algorithms. AI-powered clinical decision support specifically uses machine learning or other AI methods to generate recommendations from data, rather than relying solely on manually programmed rule sets. A basic drug reference app is CDS. An app that analyses your specific patient’s medication history, renal function, and genetic markers to recommend the optimal dose is AI-powered CDS. The distinction matters because AI-powered tools are more powerful but also more complex, less transparent, and more dependent on data quality than traditional rule-based CDS.
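To make the distinction concrete, here is a rough sketch that contrasts a hand-written rule with a small logistic model. Everything in it is an assumption for illustration: the cut-offs, the coefficients, and the function names `rule_based_flag` and `learned_risk` are invented, and none of the numbers are clinical guidance. In a real AI-powered tool the coefficients would be learned from historical patient data rather than typed in.

```python
import math

# Illustrative cut-offs only; not clinical guidance.
HEART_RATE_LIMIT = 110
RESP_RATE_LIMIT = 24


def rule_based_flag(heart_rate: float, resp_rate: float) -> bool:
    """Traditional CDS: a manually programmed rule with fixed cut-offs."""
    return heart_rate > HEART_RATE_LIMIT or resp_rate > RESP_RATE_LIMIT


# Hypothetical coefficients standing in for values a model would learn
# from historical patient data; they are invented for this sketch.
INTERCEPT = -8.0
WEIGHTS = {"heart_rate": 0.04, "resp_rate": 0.10, "age": 0.02}


def learned_risk(heart_rate: float, resp_rate: float, age: float) -> float:
    """AI-style CDS: a logistic model that combines inputs into a probability."""
    z = (
        INTERCEPT
        + WEIGHTS["heart_rate"] * heart_rate
        + WEIGHTS["resp_rate"] * resp_rate
        + WEIGHTS["age"] * age
    )
    return 1.0 / (1.0 + math.exp(-z))


# A borderline patient: no single value crosses a rule threshold,
# yet the model still returns a graded risk estimate.
flag = rule_based_flag(heart_rate=102, resp_rate=22)
risk = learned_risk(heart_rate=102, resp_rate=22, age=47)
print(f"Rule-based flag: {flag}")
print(f"Model risk estimate: {risk:.2f}")
```

The rule is easy to read and audit but only answers yes or no. The model gives a graded estimate from several inputs at once, but its behaviour depends entirely on the data used to set its coefficients, which is why data quality and transparency matter more for AI-powered CDS.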

FAQ 8: How do I deal with colleagues who are resistant to AI tools?

With empathy and evidence. Technology resistance in nursing often stems from genuine concerns: fear of de-skilling, distrust of unfamiliar systems, previous bad experiences with poorly implemented tools, or worries about job security. Listen to these concerns — they are often valid. Then engage with evidence: share outcomes data from facilities that have implemented the tool successfully, involve resistant colleagues in the pilot or evaluation phase, and create space for concerns to be heard by nursing leadership. Forcing adoption without buy-in reliably produces the worst possible outcome: AI tools deployed but not used, or used with resentment and minimal engagement.

FAQ 9: Will AI tools increase or decrease nurse workload?

In the long term, well-implemented AI tools reduce administrative and monitoring burden, freeing nurses for direct patient care. In the short term, implementation typically increases workload due to training requirements, workflow adjustments, and the inevitable technical issues of any new system. Studies suggest a net positive impact over a 12-to-24-month adoption period (Topol, 2019). The critical variable is implementation quality: AI tools deployed without adequate training, workflow integration, and ongoing support consistently fail to deliver benefits and often create additional burden. Advocate for proper implementation timelines and resource commitments from your facility.

FAQ 10: What AI-related skills should nurses prioritise learning now?

Start here: (1) EHR proficiency: comfortable, accurate, and efficient use of your facility’s electronic health record is the foundational skill for all clinical AI interaction; (2) basic data interpretation: understanding what sensitivity and specificity mean, what a positive predictive value tells you, and how to read a risk score; (3) digital security hygiene: password management, data privacy practices, and recognising phishing; (4) AI ethics fundamentals: understanding bias, accountability, and transparency in algorithmic systems; and (5) learning agility: the practice of quickly picking up new software tools and workflows. Formal courses in nursing informatics, health informatics, or data science for health professionals are increasingly available online on platforms such as Coursera, edX, and the WHO’s OpenWHO.
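For the data interpretation skill, the three measures named above can be worked out from four counts. The sketch below uses the standard definitions; the patient counts are invented for illustration and do not come from any real alerting tool.

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """Share of patients who truly deteriorated that the tool flagged."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg: int, false_pos: int) -> float:
    """Share of stable patients that the tool correctly left unflagged."""
    return true_neg / (true_neg + false_pos)


def positive_predictive_value(true_pos: int, false_pos: int) -> float:
    """Share of alerts that turned out to be true deterioration."""
    return true_pos / (true_pos + false_pos)


# Invented counts: 1,000 patients, 50 of whom truly deteriorated.
true_pos, false_neg = 40, 10    # deteriorating patients: flagged vs missed
false_pos, true_neg = 95, 855   # stable patients: flagged vs correctly cleared

sens = sensitivity(true_pos, false_neg)
spec = specificity(true_neg, false_pos)
ppv = positive_predictive_value(true_pos, false_pos)

print(f"Sensitivity: {sens:.2f}")
print(f"Specificity: {spec:.2f}")
print(f"Positive predictive value: {ppv:.2f}")
```

With these invented numbers the tool catches 80% of deteriorating patients and correctly clears 90% of stable ones, yet only about 30% of its alerts are true positives, because deterioration is uncommon in the sample. This is why an alert is a probability to assess, not a certainty.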

Acknowledgements

I would like to express my profound gratitude to the frontline nurses of the Ghana Health Service — the men and women who provide compassionate, skilled care under circumstances that would challenge the most resourced healthcare systems in the world. Your dedication is the foundation upon which any technological advancement in Ghanaian healthcare must be built.

To my nursing mentors and senior colleagues across my years in ICU, Emergency, Pediatrics, and General Ward settings: thank you for modelling the relentless clinical curiosity and patient-centred practice that I try to bring to everything I write.

To the Ghana Registered Nurses and Midwives Association (GRNMA) and the Nurses and Midwifery Council (NMC) Ghana: your professional frameworks and advocacy create the conditions in which nurses like me can grow, lead, and contribute.

To the global nursing community and the healthcare technology innovators working to make AI tools accessible, equitable, and validated for diverse populations: the future you are building matters. Please keep African patients and African health systems in your design considerations.

Finally, to every nurse who has experienced the weight of caring for more patients than is safe, in facilities with less equipment than is adequate, on salaries that do not reflect the value of the lives they protect: you are the most important healthcare technology in existence. This article is for you.

About the Author — Abdul-Muumin Wedraogo, RN, BSN

Abdul-Muumin Wedraogo, RN, BSN

Registered General Nurse | Systems Engineer | Health Technology Advocate

Abdul-Muumin Wedraogo is a Registered General Nurse with over 10 years of clinical experience in Emergency Room, Pediatric, ICU, and General Ward settings within the Ghana Health Service. He holds a BSc in Nursing from Valley View University, a Diploma in Network Engineering, and an Advanced Professional in System Engineering from OpenLabs and IPMC Ghana.

His unique dual expertise bridges the gap between frontline clinical practice and healthcare technology implementation, enabling him to write about artificial intelligence, digital health, and nursing informatics with both technical accuracy and bedside authenticity.

Professional Memberships:

• Nurses and Midwifery Council (NMC) Ghana

• Ghana Registered Nurses and Midwives Association (GRNMA)

References

Arndt, B. G., Beasley, J. W., Watkinson, M. D., Temte, J. L., Tuan, W. J., Sinsky, C. A., & Gilchrist, V. J. (2017). Tethered to the EHR: Primary care physician workload assessment using EHR event log data and time-motion observations. Annals of Family Medicine, 15(5), 419–426. https://doi.org/10.1370/afm.2121

Churpek, M. M., Adhikari, R., & Edelson, D. P. (2016). The value of vital sign trends for detecting clinical deterioration on the wards. Resuscitation, 102, 1–5. https://doi.org/10.1016/j.resuscitation.2016.02.005

Esteva, A., Kuprel, B., Novoa, R. A., Ko, J., Swetter, S. M., Blau, H. M., & Thrun, S. (2017). Dermatologist-level classification of skin cancer with deep neural networks. Nature, 542(7639), 115–118. https://doi.org/10.1038/nature21056

Ghana Data Protection Act. (2012). Data Protection Act, 2012 (Act 843). Parliament of Ghana. https://www.dataprotection.org.gh/

Hannun, A. Y., Rajpurkar, P., Haghpanahi, M., Tison, G. H., Bourn, C., Turakhia, M. P., & Ng, A. Y. (2019). Cardiologist-level arrhythmia detection and classification in ambulatory electrocardiograms using a deep neural network. Nature Medicine, 25(1), 65–69. https://doi.org/10.1038/s41591-018-0268-3

Johnson, A. E. W., Bulgarelli, L., Shen, L., Gayles, A., Shammout, A., Horng, S., Pollard, T. J., Hao, S., Moody, B., Gow, B., Lehman, L. H., & Mark, R. G. (2023). MIMIC-IV, a freely accessible electronic health record dataset. Scientific Data, 10(1), Article 1. https://doi.org/10.1038/s41597-022-01899-x

Obermeyer, Z., Powers, B., Vogeli, C., & Mullainathan, S. (2019). Dissecting racial bias in an algorithm used to manage the health of populations. Science, 366(6464), 447–453. https://doi.org/10.1126/science.aax2342

Sjoding, M. W., Dickson, R. P., Iwashyna, T. J., Gay, S. E., & Valley, T. S. (2020). Racial bias in pulse oximetry measurement. New England Journal of Medicine, 383(25), 2477–2478. https://doi.org/10.1056/NEJMc2029240

Topol, E. J. (2019). High-performance medicine: The convergence of human and artificial intelligence. Nature Medicine, 25(1), 44–56. https://doi.org/10.1038/s41591-018-0300-7

World Health Organisation. (2021). Global strategic directions for nursing and midwifery 2021–2025. WHO. https://www.who.int/publications/i/item/9789240033863

World Health Organisation. (2022a). Medication without harm: WHO’s third global patient safety challenge. WHO. https://www.who.int/initiatives/medication-without-harm

World Health Organisation. (2022b). Ethics and governance of artificial intelligence for health: WHO guidance. WHO. https://www.who.int/publications/i/item/9789240029200

© 2025 Abdul-Muumin Wedraogo, RN, BSN | All Rights Reserved